With scientists mapping our neurons in ever greater detail, and companies like Google claiming they’re close to creating human-level artificial intelligence, the gap between brain and machine seems to be shrinking — throwing the question of consciousness, one of the great philosophical mysteries, back into the heart of scientific debate. Will the human mind — that ineffable tangle of private, first-person experiences — soon be shown to have a purely physical explanation? The neuroscientist Steven Novella certainly thinks so: ‘The evidence for the brain as the sole cause of the mind is, in my opinion, overwhelming.’

Elon Musk agrees: ‘Consciousness is a physical phenomenon, in my view’. Google’s Ray Kurzweil puts it even more bluntly: ‘A person is a mind file. A person is a software program.’

If these guys are correct, the ramifications are huge. Not only would it resolve, in a snap, a conundrum that’s troubled mankind for millennia — it would also pave the way for an entirely new episode in human history: minds uploaded to computers and all. And then there are the ethical implications. If consciousness arises naturally in physical systems, might even today’s artificial neural networks already be, as OpenAI’s Ilya Sutskever has speculated, ‘slightly conscious’? What, then, are our moral obligations towards them?

Materialists aren’t making these claims against a neutral backdrop. The challenges facing a purely physical explanation of consciousness are legion and well-rehearsed. How could our capacity for abstract thought — mathematical and metaphysical reasoning — have evolved by blind physical processes? Why, indeed, did we need to evolve consciousness at all, when a biological automaton with no internal experiences could have flourished just as well? Why, if the mind is the result of billions of discrete physical processes, do our experiences seem so unified? Most importantly, how do brain signals, those purely physical sparks inside this walnut-shaped sponge, magically puff into the rich, qualitative feeling of sounds and smells and sensations? None of these questions is necessarily insurmountable, but they certainly require something more substantial than what the philosopher David Chalmers calls ‘don’t-have-a-clue-materialism’ — the blind assumption that, even if we don’t yet understand how, the mysterious phenomenon of private, first-person experience must, in the end, just be reducible to physical facts. So what’s the evidence?

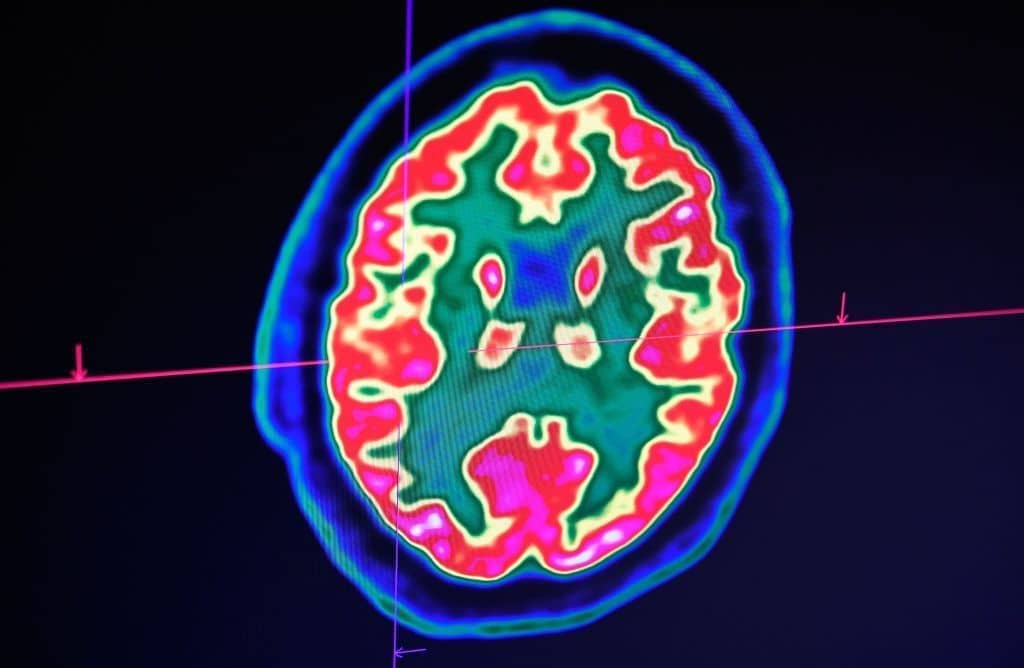

The last few years have seen a number of remarkable neuroscientific breakthroughs. In one study, scientists managed to communicate with a paralysed patient simply by asking him to imagine handwriting his thoughts. When he did so, brain implants recorded electric signals in his motor cortex, which artificial intelligence subsequently decoded with 94 per cent accuracy. In another, scientists tracked the ‘progress of a thought through the brain’: participants were asked to think of an antonym of a particular word, and electrodes planted on the cortex revealed how each step of the process — stimulus perception, word selection, and response — was ‘passed around’ to different parts of the brain. And in one landmark study, scientists claimed finally to have located the three specific areas of the brain — those linked to ‘arousal’ and ‘awareness’ — involved in the formation of consciousness.

This is all undeniably fascinating. But none of it gets us a jot closer to understanding how the brain activity we’re recording — and, in the case of the paralysed patient, harnessing for practical benefit — actually turns into first-person subjective experiences. At best, it shows that certain bits of grey matter are linked to certain kinds of conscious experience — but that’s no more than anybody, even the loftiest, magic-mushroom-popping mystic, would expect. Even the study that Steven Novella claims ‘completely destroys any notion… that mental function exists somehow outside of or separate from the biological functioning of the brain’ only shows that mice need certain bits of their brains to be functioning properly if they are to solve puzzles. Oh.

This isn’t surprising. It’s been three hundred years now since Gottfried Leibniz made the fundamental and obvious point that, if you could inflate a human brain to the size of a large building and step inside, you still wouldn’t be able to ‘locate’ within it any subjective perceptions. And even with the benefit of modern science, nobody since has come close to answering how you ever could find them — even theoretically. To paraphrase Nietzsche, we have described in ever more detail, and still explained nothing.

Take, for instance, perhaps the most popular scientific ‘theory of consciousness’ currently going: Integrated Information Theory (IIT). IIT begins with an interesting observation: that consciousness seems to have little, if anything, to do with the number of neurons in a given brain region. The cerebellum, for instance, which accounts for 69 billion of the brain’s 86 billion neurons, doesn’t play any significant role in conscious experience at all, while the cerebrum, which is far larger but contains far fewer neurons, does. So what makes the difference? According to Giulio Tononi, the mastermind behind IIT, it’s that the neurons in the cerebrum are far more interconnected. From this, Tononi concludes that what produces consciousness is the amount of ‘integrated information’ in a system, something he believes can be calculated with mathematical precision, and that he expresses with the Greek letter Φ (Phi).

There are early signs that IIT fits the empirical data pretty well — correlating with the levels of consciousness we seem to experience in deep sleep, epileptic seizures, and comas. But what it doesn’t do is explain anything at all about how integrated information actually produces consciousness. As the computer scientist Scott Aaronson points out, the theory might as well simply be saying that, if you created a sufficiently complex system, ‘God would notice its large Φ-value and generously bequeath it a soul.’


The neuroscientists Aaron Schurger and Michael Graziano argue, in fact, that IIT doesn’t really qualify as a theory at all. Rather, it’s a ‘proposed law’ of consciousness: a statement of what ‘just happens’ in the brain when we’re conscious, without our really knowing why. As they put it:

> We arrive at a chasm where we say ‘and then consciousness happens’ in order to magically jump over the explanatory gap. Virtually all accounts of consciousness have this in common. They walk you to the edge (from different angles) and then somehow you find yourself on the other side, without understanding how you got there.

As Schurger and Graziano readily acknowledge, Tononi himself doesn’t claim to have explained how we get across the chasm. His focus, like that of most neuroscientists working on the problem of consciousness today, is simply to look for ‘neural correlates of consciousness’: to build up as clear a picture as we can of what’s going on, scientifically speaking, on this side of the rift.

But is this really as ‘neutral’ an approach as it seems? Doesn’t the very decision to set aside — to demarcate, effectively, as scientifically off-limits — the question of how, at the very last moment, physical brain activity suddenly, somehow bursts into full-blown, technicolour subjective experience, implicitly accept a ‘dualistic’ picture of reality? Doesn’t it ultimately concede that grey matter and consciousness are of fundamentally different — qualitatively different — natures?

That’s the case made by Riccardo Manzotti and Paolo Moderato in their paper ‘Neuroscience: Dualism in Disguise’. Neutrality, they argue, is impossible: even a ‘description’ of how the brain ‘part’ of consciousness works, if it is to tell us anything meaningful at all, invariably needs to make reference to some broader theory of how the whole thing fits together. To maintain the air of respectability, neuroscientists, they claim, invent an ‘intermediate stage’ — in this case, ‘information’ — that seems to be describing something scientific, but is, in fact, simply acting as a ‘respectable sounding’ placeholder for the final metaphysical leap from brain to mind. The whole hypothesis of IIT, they conclude, is ‘tantamount to assuming the standard physical world and, on top of it, a level of information floating above. This is full-fledged dualism’.

In fact, they argue, the ‘implicit assumptions adopted by most neuroscientists invariably lead to some sort of dualistic framework’. Collapsing the Venn diagram between brain and mind into a single, perfect circle, it seems, is a lot harder than we thought.

Whether neuroscientists admit it or not, though, this unshakeable qualitative distinction between matter and experience is almost certainly why ‘neural correlates’ won’t give us the detailed physical ‘map’ of consciousness they’re after. Consider an analogy some scientists like to use: that the relationship between consciousness and neurons is like that between gravity and maths. We don’t understand why gravity pulls bodies together — it’s simply a ‘brute fact’ that it does — but maths can nonetheless predict with incredible accuracy exactly what does happen. Similarly, we don’t understand why brain activity causes consciousness — it’s simply a ‘brute fact’ that it does — but neuroscience can nonetheless allow us to make detailed predictions about what conscious experiences are playing out inside somebody’s mind.

But this is a fundamental misunderstanding. Gravity lends itself to quantification: bump up the numbers in the formula (double a body’s mass, say) and the gravitational force rises in proportion. Consciousness simply isn’t like that. We don’t have any idea how you divide up the unity of conscious experience into quantifiable parts. Even ‘simple’ thoughts come to us fully formed: it’s impossible to imagine, as the physicist Stephen M. Barr has pointed out, having 37 per cent of the thought of the number two.

That isn’t to say, of course, that there aren’t obviously strong, if still fuzzy, links between certain parts of the brain and certain kinds of conscious sensation. But to expect this to resolve into an infinitely detailed, one-to-one map of the mind, where every ‘discrete’ thought is represented by a discrete physical counterpart, seems wildly naive.

But what if we could… just explain consciousness away? That’s the approach of a particularly radical band of materialists known as ‘illusionists’. Well aware of the implicit dualism of their materialist peers, illusionists believe the only way to eliminate the explanatory gap between brain and mind is simply to deny the mind exists — to argue that it only appears to us that it does.

This seems a self-evidently self-defeating position. As the writer David Bentley Hart puts it, ‘the illusion of consciousness would have to be the consciousness of an illusion, so any denial of the reality of consciousness is essentially gibberish’. The philosopher Galen Strawson puts it less charitably, calling illusionism ‘the silliest claim ever made’.

We’re left, then, with two awkward, if ultimately commonsensical, facts: that the subjective experience of consciousness is real; and that it is of an entirely different qualitative nature to the physical facts of the brain. So what now?

The first step is to broaden our conception of science. As the philosopher Philip Goff argues, modern science really comes to us in an artificially simplified form — blind, by design, to any parts of reality that cannot be expressed in maths. This isn’t, as we’ve since tricked ourselves into believing, because the world itself is wholly quantitative, but because the father of modern science, Galileo, made the deliberate decision to set aside questions of a qualitative nature — including those concerning consciousness — so that our investigations of the material world could proceed more efficiently.

It was a ruthlessly effective move, but it was never intended, Goff argues, to be a statement about the one and only true nature of reality. If Galileo were here today, Goff says, he wouldn’t be surprised in the slightest that we hadn’t solved consciousness using physical science — indeed, he’d think it was absurd we were even trying. As Manzotti and Moderato put it, it’s ‘like declaring that there are no forces acting at a distance and then trying to explain gravity’.

So what would an expanded scientific approach to consciousness even look like?

One increasingly popular, albeit controversial, approach is to think of the brain not as the source of consciousness, but rather as a filter or transmitter by which consciousness enters the world. This concept goes back at least as far as William James, who believed consciousness was a fundamental background feature of the universe (the ‘genuine matter of reality’) that breaks ‘through our several brains into this world in all sorts of restricted forms, and with all the imperfections and queernesses that characterise our finite individualities here below.’

I first came across the idea via the evolutionary biologist Simon Conway Morris, who has suggested that the brain doesn’t ‘produce’ consciousness, but ‘encounters’ it — and that this ‘discovery’ of consciousness is a way of the universe becoming self-aware (the physicist Paul Davies has argued something similar, as has, from an atheist perspective, the philosopher Thomas Nagel). This idea of the brain as an ‘antenna’ has also been proposed, albeit tentatively, by the neuroscientist Paul Nunez and the former CERN scientist Bernardo Kastrup.

To be clear, such theories are not altogether popular among neuroscientists — one I spoke to called them outright pseudoscience. But proponents argue that many empirical phenomena — including some that are hard to explain in conventional neuroscientific terms — are actually more elegantly accounted for if you treat the brain as a carrier, not a source, of consciousness.

Conway Morris gives the examples of hydrocephalus — a well-reported phenomenon where patients appear to be missing as much as 90 per cent of their brains, and yet, in some cases at least, function almost entirely normally — and ‘terminal lucidity’, where senile or brain-damaged people appear suddenly to return to full, lucid consciousness in the hours before they die. The German psychologist Michael Nahm, like James before him, also thinks that fringe paranormal phenomena like near-death experiences and ‘reincarnation episodes’ could be brought into the mainstream if we took the ‘filter’ approach.

I get why this stuff makes materialist neuroscientists squeamish — among other things, it seems incredibly difficult to test. But that, unfortunately, appears to be the way with consciousness. And hydrocephalus and terminal lucidity, at least, have the benefit of being ‘externally verifiable’ phenomena (that is, you can’t just fake a massive cavity in your brain, nor, if you have Alzheimer’s, can you simply pretend you don’t). Perhaps a more conventional explanation for both will arise, but I see no particular harm in keeping an open mind.

Another, not altogether unrelated, theory having a renaissance at the moment is panpsychism — the view that consciousness is a fundamental part of all physical reality, not just of complex systems like the brain. Panpsychism is an ancient idea, held widely in pre-Socratic Greece, and adopted by numerous other philosophers since. But it blossomed in the early twentieth century, when the British astronomer Arthur Eddington, the first man to verify Einstein’s general theory of relativity empirically, put it at the heart of his scientific picture of the world — arguing, essentially, that basic physical properties like mass and energy were outward manifestations of matter’s even more fundamental nature: consciousness.

It’s a subtle, somewhat counterintuitive view. But contemporary panpsychists argue that their ideas actually fit better with modern science than materialism does.

The reason lies in the development of quantum theory over the last century. At the heart of quantum mechanics is the bewildering ‘observer effect’: the idea, on some interpretations, that certain quantum states (whether an electron behaves as a particle or a wave, for instance) only resolve themselves one way or the other when a conscious mind actually observes them. If true (and, though it remains a minority interpretation, some eminent physicists have believed it is), this spells disaster for the mechanistic view of reality that sees mind as mere superfluous fluff, since it appears to weave consciousness intimately into the fundamental laws of physics. The early quantum physicists didn’t mince their words. As Max Planck wrote:

> I regard consciousness as fundamental. I regard matter as derivative from consciousness. We cannot get behind consciousness. Everything that we talk about, everything that we regard as existing, postulates consciousness.

Exactly what we conclude from this is, needless to say, hotly contested. The mathematician Roger Penrose has suggested that consciousness arises inside microtubules, minuscule structures bundled up inside our neurons, which ‘lock’ quantum fluctuations in a stable state for long enough, and in large enough quantities, for something like coherent subjective experience to appear. The quantum physicist Henry Stapp argues that the entire physical world is a structure of ‘tendencies’ or ‘probabilities’ within a universal, transpersonal mind. The theoretical physicist Lee Smolin says that space itself is an illusion, and that the seeming ‘dimensionality’ of reality consists, ultimately, only of relationships between subjective perspectives.

At this point, the brain — mind, consciousness, self, whatever it is — simply starts to melt. And all the subtle variants of anti-materialism out there (dualism, panpsychism, idealism) begin to look like the same basic argument churned up in a blender and spat out in different ways: that materialism, when you really press it to explain how it’s possible for the human brain to grapple with ideas like this in the first place, seems like the most absurd theory of them all. The ‘mind arises from the wetware of the brain’? Pull the other one.
