The Sage committee was set up as a pool of experts on tap to advise government. During the pandemic, it mutated into something different: a group whose advice ended up advocating long lockdowns. Its regular meetings have now been discontinued, with questions being asked in No. 10 about whether it’s time to disband Sage and set up a new structure – in the same way that Public Health England was reformed and became the UK Health Security Agency. There will be plenty of lessons to learn. But we might not have much time to learn them: a new variant or (given the growth of genomic sequencing) a new pathogen could come along at any time.

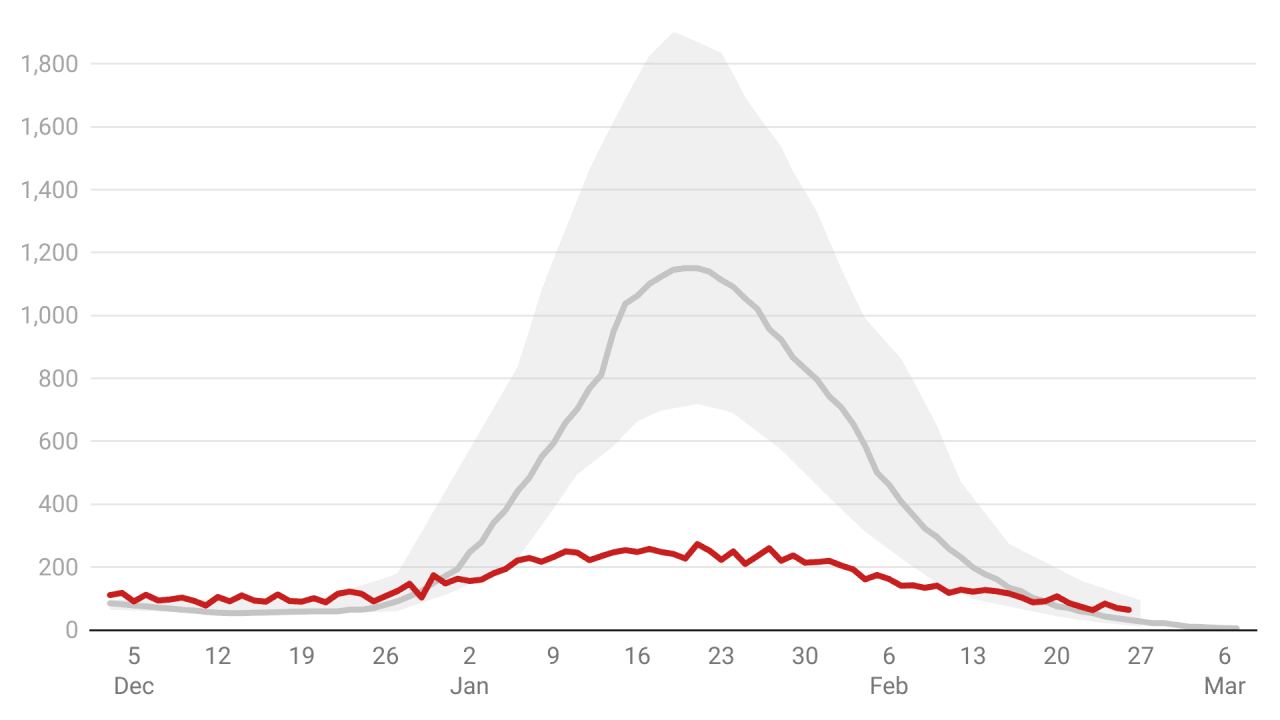

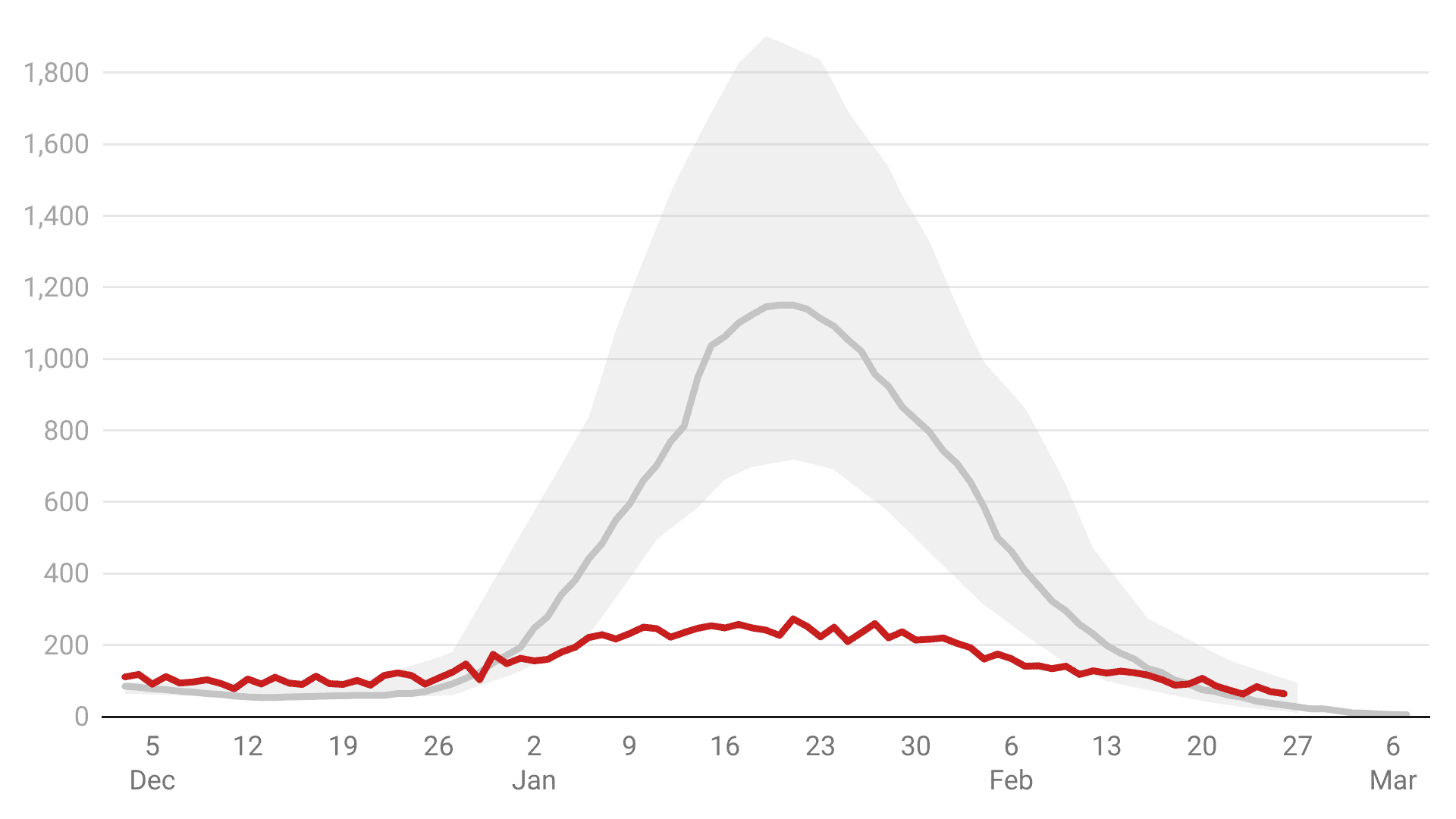

This matters. Failure to identify past mistakes will guarantee that they are repeated – and that’s why the evidence given by Professor Graham Medley, chair of the Sage modellers, to the science and technology select committee matters. The figures his team collated suggested that Omicron would kill between 600 and 6,000 people a day – as it turned out, fatalities peaked at 273 on 21 January. The cabinet faced immense pressure to lock down. As is now well known, the models were wildly out.

1. ‘At variance to reality’. Professor Medley said he was ‘happy to explain why the models had been at variance to reality.’ Importantly, he accepts the failure.

2. Medley still maintains better data was not available. But it was. By 15 December, when Sage’s 600 to 6,000 death figure was printed, JP Morgan had used South African data to (correctly) model that hospitalisations would peak at 1,500 a day. The select committee missed a trick by not calling in JP Morgan and asking it to explain why its 13 December note was more accurate than anything that Sage provided to ministers. Chris Whitty is still saying that by 15 December everything we knew about Omicron was ‘bad’. That’s factually untrue and this needs more scrutiny. Was the South African real-world data not being shown to him? If so, how did the UK system fail?

Using new South African data on Omicron severity and length of hospital stay, JP Morgan was able to conclude on 13 December that Omicron hospitalisation was such that the whole wave would be ‘manageable without further restrictions.’ So it was to prove.

Did private-sector discipline and forecasting methods allow for greater accuracy and faster error-correction than the Sage system? And should independent models (like JP Morgan’s) be presented to ministers as a matter of routine? HM Treasury regularly collects independent forecasts for the economy, for example. The growth of independent epidemiological modelling should surely be factored in for future lockdown decisions.

As structured, the Sage system grants huge power to those who control the (anonymised) process of commissioning studies and selecting how those studies are presented to policymakers.

3. Prof Medley suggested he believed Sage’s job is to be gloomy. Previously modellers have denied that they have a negativity bias. But Medley seemed to concede that they do. It wasn’t because they wanted to push a particular policy such as lockdown, but because they were concerned at a government’s reaction if they underpredicted: ‘The worst thing for me would be for the government to say, “Why didn’t you tell us it would be that bad?” So, inevitably, we’re always going to have a worst case above reality.’

He also said that ‘hopefully the best-case scenario looks better than reality, but in this case it didn’t.’

4. It was ‘generation time’, not just severity. As has been reported before, the generation time was a key factor in the mildness of the Omicron wave. The generation time is the time between a person becoming infected and then passing the virus on to someone else. For a given growth rate, the shorter this time is, the closer that variant’s R number is to one. The closer the R number is to one, the greater effect restrictions and behaviour change can have.

The New Year Sage minutes revealed that modellers believed the generation time was shorter, and this was a large factor in the overestimations in their models – which had assumed the same generation time as the Delta strain. Now Professor Medley says this was always the hunch: ‘we had a suspicion that the virus had a different generation time’. Crucially, he said, this meant there wasn’t necessarily a link between the ‘rapid growth rate’ and high transmissibility.

If this was suspected, why wasn’t it included in the original models?
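The link between generation time and R can be made concrete with a short sketch. It assumes every infection is passed on exactly one generation time after it was acquired, which gives the textbook relation R = exp(r × T) for daily growth rate r and generation time T. The 2.5-day doubling time and the candidate generation times are illustrative assumptions, not figures from Sage or from the hearing.

```python
import math


def reproduction_number(growth_rate_per_day, generation_time_days):
    """Implied R for a given exponential growth rate and generation time.

    Assumes a fixed generation time (every onward infection happens exactly
    generation_time_days after infection), so R = exp(r * T).
    """
    return math.exp(growth_rate_per_day * generation_time_days)


# Hypothetical observation: cases doubling every 2.5 days.
doubling_time_days = 2.5
growth_rate = math.log(2) / doubling_time_days

# The same observed growth implies a very different R depending on the
# generation time fed into the model (values here are illustrative only).
for generation_time in (5.0, 3.0, 2.0):
    r_number = reproduction_number(growth_rate, generation_time)
    print(f"generation time {generation_time:.1f} days -> implied R = {r_number:.2f}")
```

Under these assumptions the observed growth rate is identical in every row; only the assumed generation time changes, and the implied R falls towards one as that time shortens.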

5. Why did Denmark get it right? Not all countries had such failures in predicting how the Omicron wave would pan out. Denmark’s Expert Group for Mathematical Modelling produced scenarios for Omicron hospitalisations that mapped well to reality.

Dr Camilla Holten-Møller – who chairs the Danish modelling group – also gave evidence to the select committee hearing. She explained that they took care to factor behaviour change into their models: when cases reached a certain level in a local area, people would mix less. So the model was designed to reflect that. Sage didn’t do this.
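A toy example shows how much a behavioural assumption of this kind can move a projection. The sketch below is a basic SIR model in which the contact rate drops once prevalence passes a threshold. It is not the Danish group’s model or Sage’s: the function name, the threshold, the size of the reduction and every parameter value are hypothetical, chosen only to illustrate the effect.

```python
def simulate_peak(beta, gamma, days, threshold=None, reduction=0.5, initial_infected=1e-4):
    """Return peak prevalence (fraction infected) from a daily-step SIR model.

    If threshold is set, the contact rate beta is cut by `reduction` on any day
    when prevalence exceeds the threshold: a crude stand-in for people choosing
    to mix less when local cases are high.
    """
    susceptible = 1.0 - initial_infected
    infected = initial_infected
    peak = infected
    for _ in range(days):
        effective_beta = beta
        if threshold is not None and infected > threshold:
            effective_beta = beta * (1.0 - reduction)
        new_infections = effective_beta * susceptible * infected
        recoveries = gamma * infected
        susceptible -= new_infections
        infected += new_infections - recoveries
        peak = max(peak, infected)
    return peak


# Illustrative parameters only: R0 = beta / gamma = 3 with no behaviour change.
no_feedback = simulate_peak(beta=0.6, gamma=0.2, days=300)
with_feedback = simulate_peak(beta=0.6, gamma=0.2, days=300, threshold=0.02)

print(f"peak prevalence, no behaviour change:   {no_feedback:.1%}")
print(f"peak prevalence, with behaviour change: {with_feedback:.1%}")
```

The point is not the specific numbers but the direction: a model that assumes people carry on regardless will project a higher peak than one that lets behaviour respond to rising cases.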

6. Sage’s great blind spot: human behaviour. Medley said it’s almost impossible to predict human behaviour. ‘You’d make a fortune on the stock exchange [if you could]’, he said. But this misses a point. You could make some pretty safe assumptions, based on known and well-documented evidence of how people react in a high-information democracy when pandemics strike. Sage have had the CoMix survey, and Google mobility data has been freely available. The ubiquity of mobile phones means that Google data is now very good at showing how (for example) Swedes locked themselves down without being told to – and that Brits had done so too before lockdown was enforced.

Failure to factor this in was perhaps the biggest single flaw in lockdown logic. Can you predict this precisely? No. But is it sensible to come up with modelling that assumes that without restrictions people would carry on as if there was no virus? Of course not. Medley went on to say that the ‘pingdemic’ in summer was ‘by far the most effective three days in reduction of transmission that we’ve seen throughout the whole epidemic. Much more effective than any of the lockdowns’. If that was known, surely it could have been factored into the Omicron models.

7. Even Sage ask: where was the economic modelling? Professor Medley said he knew lockdown would have deep social and economic concerns and thought that someone else would model those, judging the whole situation alongside his:

‘My suspicion and my hope is that within government they use the results of the modelling and they use the outputs of those scenarios in further models and further risk analysis to be able to come up with and include any economic information – which we don’t see – to come up with a support for the decision that’s actually made.’

Former cabinet secretary Lord O’Donnell made the same point: why weren’t Sage’s reports fed into a higher committee that also looked at the social and economic impact of restrictions? This needs to be rectified.

8. Did Dr Raghib Ali save Christmas? The select committee was keen to find out why the government correctly made the decision not to lock down. A later witness, Dr Raghib Ali, a clinical epidemiologist at Cambridge University, shed some interesting light on what happened. He had been giving private briefings to the cabinet through Education Secretary Nadhim Zahawi. At the end of October 2020 Dr Ali had been brought into No. 10 as part of a ‘red team’ to challenge Sage advice before the second lockdown. But this time things happened informally – Zahawi, who had worked with Dr Ali while vaccines minister and is now Education Secretary, called on his advice for Omicron: ‘he [Zahawi] asked my advice… ahead of the cabinet meeting on 20 December on my best estimate on what was likely to happen’. Dr Ali went on to tell Zahawi: ‘the most likely scenario will be the best case scenario or better.’

It was informal conversations and briefings like these that gave cabinet ministers the confidence to challenge Sage’s Omicron models. Dr Ali told Zahawi that he didn’t think the worst case scenarios were credible and that the ranges given were too wide. The JP Morgan research was also being passed around cabinet members: both Jacob Rees-Mogg and Rishi Sunak are financiers and still read original research. Both were aware of the JP Morgan 1,500 hospitalisation-a-day figure. Friends of the Prime Minister say that he had, by then, seen enough Sage graphs to smell a rat. He had noticed that cases had peaked in Gauteng, the South African epicentre, and could not see why they should not also do so in the UK.

These briefings – along with media coverage – acted as a ‘red team’ challenging Sage advice. But surely that should become a formal part of the process?

Sage won’t have regular Covid meetings anymore and will gather again only if the government requests it. No one doubts the professionalism and integrity of the scientists and academics who served on Sage: if it ended up with a bias, that would have been due to the way its research was handled by government. And if that advice was not weighed up against social and economic issues, as Professor Medley said he always thought it would, that’s on government – the scientists cannot be blamed.

For almost two years, lockdowns dominated British life. Did they make a bad situation worse? As evidence comes in from all around the world, it is crucial that facts are assembled and this question is answered. We still don’t know what went into these models because the code has never been widely published: so error detection, right now, remains impossible. The head of the Office for Statistics Regulation, Ed Humpherson, told the select committee that this needs to change: to learn lessons, we need the modelling code. And in a democracy, we need a way of scrutinising models: to be crystal clear about the assumptions used, and how using different assumptions would change the picture.
