It’s odd, when you think about it, that mathematics ever got going. We have no innate genius for numbers. Drop five stones on the ground, and most of us will see five stones without counting. Six stones are a challenge. Presented with seven stones, we will have to start grouping, tallying and making patterns.

This is arithmetic, ‘a kind of “symbol knitting”’ according to the maths researcher and sometime teacher Paul Lockhart, whose Arithmetic explains how counting systems evolved to facilitate communication and trade, and ended up watering (by no very obvious route) the metaphysical gardens of mathematics.

Lockhart shamelessly (and successfully) supplements the archaeological record with invented number systems of his own. His three fictitious early peoples have decided to group numbers differently: in fours, in fives, and in sevens. Now watch as they try to communicate. It’s a charming conceit.
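Lockhart’s three peoples are his own invention, so what follows is only a minimal sketch of the arithmetic behind the conceit. It uses a hypothetical helper, `to_base`, to show one pile of 30 stones as it would be recorded by people who group in fours, fives and sevens. It borrows our Hindu-Arabic digits, which none of Lockhart’s tribes would have had.

```python
def to_base(n: int, base: int) -> str:
    """Write a non-negative integer n as digits in the given base (2..10)."""
    if not 2 <= base <= 10:
        raise ValueError("base must be between 2 and 10")
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        # Form as many full groups as possible; the leftover stones are this digit.
        n, remainder = divmod(n, base)
        digits.append(str(remainder))
    # Digits were produced smallest group first, so reverse them for reading.
    return "".join(reversed(digits))


for base in (4, 5, 7):
    print(f"30 stones, grouped in {base}s: {to_base(30, base)}")
```

The loop prints 132, 110 and 42. These are three quite different-looking records of the same pile, which hints at why communication between the three peoples is hard.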

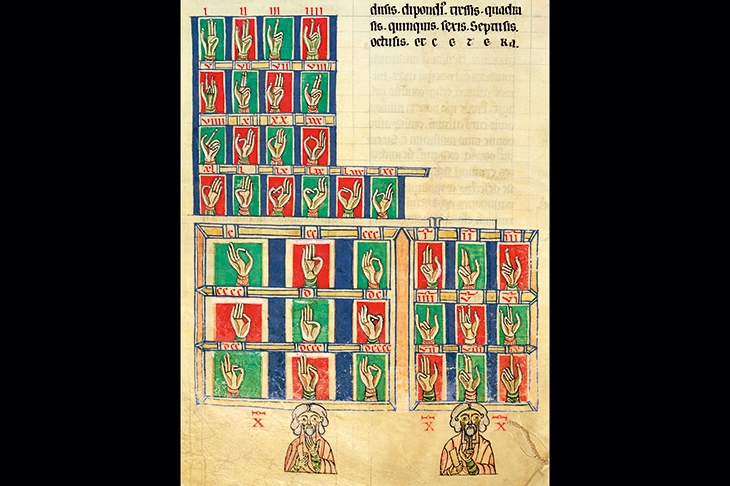

Arithmetic is supposed to be easy, acquired through play and practice rather than through the kind of pseudo-theoretical ponderings that blighted my 1970s-era state education. Lockhart has a lot of time for Roman numerals, an effortlessly simple base-ten system which features subgroup symbols like V (5), L (50) and D (500) to smooth things along. From glorified tallying systems like this, it’s but a short leap to the abacus.
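Roman numerals can be sketched the same way. The hypothetical `to_additive_roman` below assumes the purely additive convention, in which 4 is written IIII (as on many clock faces) rather than the subtractive IV. That choice makes the kinship with tallying plain: each subgroup symbol simply stands in for a run of smaller ones.

```python
# Symbols from largest to smallest. Each "subgroup" symbol replaces a run of
# smaller tallies: V for IIIII, X for VV, L for XXXXX, and so on.
SYMBOLS = [
    (1000, "M"), (500, "D"), (100, "C"), (50, "L"),
    (10, "X"), (5, "V"), (1, "I"),
]


def to_additive_roman(n: int) -> str:
    """Write a positive integer in purely additive Roman numerals (4 -> IIII)."""
    if n <= 0:
        raise ValueError("Roman numerals have no zero or negatives")
    parts = []
    for value, symbol in SYMBOLS:
        # Use as many of this symbol as fit, then carry the rest down.
        count, n = divmod(n, value)
        parts.append(symbol * count)
    return "".join(parts)


print(to_additive_roman(1202))  # MCCII
print(to_additive_roman(1979))  # MDCCCCLXXVIIII
```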

It took an eye-watering six centuries for Hindu-Arabic numbers to catch on in Europe (via Fibonacci’s Liber Abaci of 1202). For most of us, abandoning intuitive tally marks and bead positions for a set of nine exotic squiggles and a dot (the forerunner of zero) is a steep price to pay for an impossibly distant benefit. ‘You can get good at it if you want to,’ says Lockhart, in a fit of under-selling, ‘but it is no big deal either way.’

It took another four centuries for calculation to become a career, as sea-going powers of the late 18th century wrestled with the problems of navigation. In an effort to improve the accuracy of their logarithmic tables, French mathematicians broke the necessary calculations down into simple steps involving only addition and subtraction, assigning each step to human ‘computers’.
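The review does not name the technique, but a standard way to reduce table-making to addition and subtraction is the method of finite differences. For a polynomial, once the first few values and their successive differences are known, every later entry is just a running sum. The sketch below assumes that the function being tabulated is itself a polynomial with integer coefficients. A real table-maker would first have to approximate the logarithm by a polynomial over each short stretch of the table. The name `tabulate` is hypothetical.

```python
def tabulate(poly: list[int], start: int, count: int) -> list[int]:
    """Tabulate a polynomial at start, start+1, ... by repeated addition.

    `poly` holds integer coefficients, lowest degree first.
    Only the first degree+1 values are computed directly; every entry in
    the loop below comes from adding up differences.
    """
    degree = len(poly) - 1

    def evaluate(x: int) -> int:
        return sum(c * x**k for k, c in enumerate(poly))

    # Seed values, computed once, then the leading column of the
    # difference table (built by subtraction).
    seeds = [evaluate(start + i) for i in range(degree + 1)]
    diffs = [seeds[0]]
    row = seeds
    while len(row) > 1:
        row = [b - a for a, b in zip(row, row[1:])]
        diffs.append(row[0])
    # diffs[k] is now the k-th difference at x = start; the last one is constant.

    table = []
    for _ in range(count):
        table.append(diffs[0])
        # Each difference absorbs the one below it: additions only.
        for k in range(degree):
            diffs[k] += diffs[k + 1]
    return table
```

For example, `tabulate([0, 0, 1], 0, 5)` gives `[0, 1, 4, 9, 16]`. Every entry costs two additions and no multiplication, which is the kind of step that could be handed to a human ‘computer’.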

What was there about navigation that involved such effortful calculation? Blame a round earth: the moment we pass from figures bounded by straight lines or flat surfaces we run slap into all the problems of continuity and the mazes of irrational numbers. Pi, the ratio of a circle’s circumference to its diameter, is ugly enough in base 10 (3.14159…). But calculate pi in any base, and it churns out digits forever without ever settling into a repeating pattern. It cannot be expressed as a ratio of two whole numbers. Mathematics began when practical thinkers like Archimedes decided to ignore naysayers like Zeno (whose paradoxes were meant to bury mathematics, not to praise it) and deal with nonsenses like pi and the square root of 2.
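The leap from ‘churns out digits forever’ to ‘cannot be a fraction’ rests on one observation about long division. Dividing p by q can leave at most q different remainders, so the digits of any fraction, in any base, must eventually stop or repeat. The hypothetical `expand` below makes that visible for proper fractions. That pi’s digits never fall into such a cycle is a theorem, and no program can demonstrate it.

```python
DIGITS = "0123456789abcdefghijklmnopqrstuvwxyz"


def expand(p: int, q: int, base: int = 10) -> tuple[str, str]:
    """Digits after the point of the proper fraction p/q (0 <= p < q) in `base`.

    Returns (non-repeating prefix, repeating cycle); the cycle is "" if the
    expansion terminates. Long division can leave at most q different
    remainders, so some remainder must recur, and from then on the digits repeat.
    """
    if not (0 <= p < q and 2 <= base <= len(DIGITS)):
        raise ValueError("need 0 <= p < q and 2 <= base <= 36")
    digits = []
    seen = {}  # remainder -> position of the digit it was about to produce
    remainder = p
    while remainder != 0 and remainder not in seen:
        seen[remainder] = len(digits)
        digit, remainder = divmod(remainder * base, q)
        digits.append(DIGITS[digit])
    if remainder == 0:
        return "".join(digits), ""  # the division came out exactly
    start = seen[remainder]  # a remainder recurred: the cycle starts here
    return "".join(digits[:start]), "".join(digits[start:])
```

`expand(1, 7)` returns `('', '142857')`. This shows that the schoolroom approximation 22/7 = 3 + 1/7 reads 3.142857142857…, with the block 142857 recurring forever. In base 7 the same fraction terminates: `expand(1, 7, 7)` returns `('1', '')`.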

How do such monstrosities yield such sensible results? Because mathematics is magical. Deal with it.

Ian Stewart deals with it rather well in Significant Figures, his hagiographical compendium of 25 great mathematicians’ lives. It’s easy to quibble. One of the criteria for Stewart’s selection was, he tells us, diversity. Like everybody else, he wants to have written Tom Stoppard’s Arcadia, championing (if necessary, inventing) some unsung heroine to enliven a male-dominated field. So he relegates Charles Babbage to the role of Ada King’s little helper, then repents by quoting the opinion of Babbage’s biographer Anthony Hyman (perfectly justified, so far as I know) that ‘there is not a scrap of evidence that Ada ever attempted original mathematical work’. Well, that’s fashion for you.

In general, Stewart is the least modish of writers, delivering new scholarship on ancient Chinese and Indian mathematics to supplement a well-rehearsed body of knowledge about the western tradition. A prolific writer himself, Stewart is good at identifying the audiences for mathematics at different periods. The first recognisable algebra book, by Al-Khwarizmi, written in the first half of the 9th century, was commissioned for a popular audience. Western examples of the popular form include Cardano’s Book on Games of Chance, published in 1663. It was the discipline’s first foray into probability.

As a subject for writers, mathematics sits somewhere between physics and classical music. Like physics, it requires that readers acquire a theoretical minimum, without which nothing will make much sense. (Unmathematical readers should not start with Significant Figures; it is far too compressed.) At the same time, like classical music, mathematics will not stand too much radical reinterpretation, so that biography ends up playing a disconcertingly large role in the scholarship.

In his potted biographies Stewart supplements but makes no attempt to supersede Eric Temple Bell, whose history Men of Mathematics of 1937 remains canonical. This is wise: you wouldn’t remake Civilisation by ignoring Kenneth Clark. At the same time, one can’t help regretting the degree to which an English-born mathematician and science fiction writer born in 1945 has had his limits set by the work of a Scottish-born mathematician and science fiction writer born in 1883. It can’t be helped. Mathematical results are not superseded. When the ancient Babylonians worked out how to solve quadratic equations, their result never became obsolete.

This is, I suspect, why Lockhart and Stewart have each ended up writing good books about territories adjacent to the meat of mathematics. The difference is that Lockhart did this deliberately. Stewart simply ran out of room.
